It’s not them – it’s the approach.

You said it clearly. You kept your tone calm. You even picked the right moment. And yet nothing changed. The behaviour continued, the dynamic stayed the same, and you were left wondering whether the conversation happened at all. If feedback is supposed to be a gift, why does it so often feel like something people quietly never received?

In an earlier post I shared a planning template for preparing a feedback conversation – the what, the how, and the when. You can find it here. But preparation alone doesn’t guarantee feedback lands. This post is about what gets in the way – and what to do about it.

The brain treats feedback as a threat

There’s a reason this happens, and it has nothing to do with stubbornness. Before a single word lands rationally, the brain has already decided whether it’s safe. The amygdala – the part responsible for threat detection – fires in response to criticism much the same way it responds to physical danger. This triggers defensiveness, withdrawal, or counter-attack, none of which helps change take root. So the deadlines are still being missed, the same mistake keeps happening, and the tension between team members never goes away.

This isn’t a character flaw. It’s biology. And it means you’re working against a default response no matter how well-intentioned your feedback is. Understanding this shifts the goal from delivering a message to creating the conditions where a message can actually be heard.

There is also a pattern problem. If the only time you sit down with someone is when something has gone wrong, the brain learns that quickly. A meeting request from you becomes a threat signal before a single word is spoken – which is one of the strongest arguments for regular, informal contact with your people, not just when there’s a problem.

A quick note on the feedback sandwich

You’ve probably been taught this one: start with praise, deliver the criticism, then end with praise again. It sounds considerate. It usually isn’t.

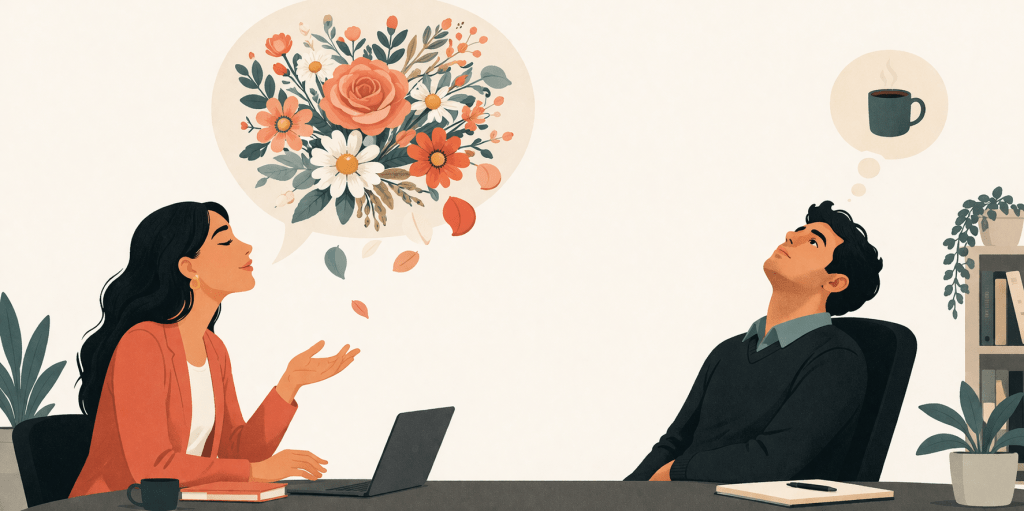

When someone opens with unexpected praise – “I just want to say you’ve been doing a really great job lately” – most people’s threat radar switches on straight away. The compliment doesn’t feel genuine; it feels like a warning. By the time the criticism arrives, the person is already braced.

There’s also a memory problem. Emotionally charged feedback sticks far more strongly than surrounding positives. The opening praise fades quickly. The closing positive barely lands. The sandwich was meant to soften the blow – it often does the opposite, and over time trains people to distrust your compliments.

What works instead: say the thing clearly and specifically, with a brief, honest opener. “I want to raise something with you because I think it matters” lands better than engineered praise – because it’s real.

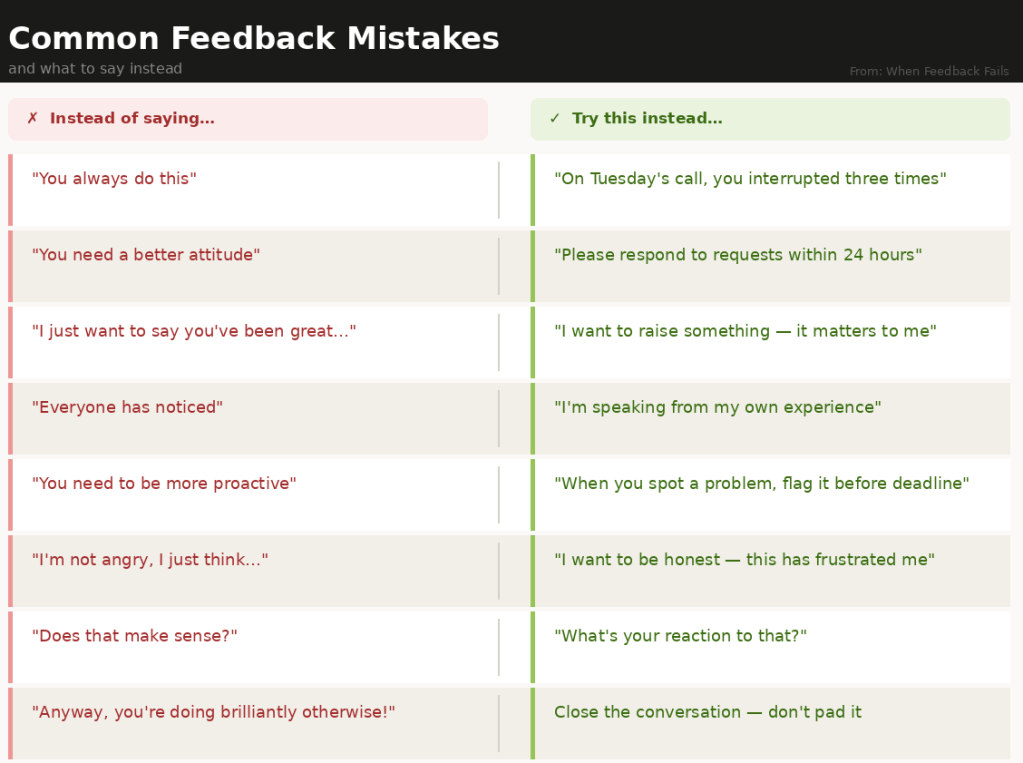

Most feedback fails before it’s even delivered

Vague observations, emotionally loaded language, and bad timing all guarantee a defensive response before you’ve finished your first sentence. The most common mistake is being clear in your head but vague when you say it out loud – telling someone they have a “bad attitude” is an interpretation, not an observation. Feedback that can’t be described in concrete, observable terms isn’t ready to be given yet.

Regular contact matters here too. If you only speak to someone when there’s an issue, you won’t know whether something outside work – a house move, a family difficulty, a health concern – is affecting them. Feedback delivered without that awareness can land badly not because it’s wrong, but because the timing is terrible.

To help with preparation, I’ve put together a free one-page planner (Feedback That Sticks) you can fill in before any feedback conversation.

👉 [Download the “Feedback That Sticks” planner below – free]

The person giving feedback has a blind spot too

People often think that because they mean well, their words will be taken well. But that’s not usually how it works. We tend to believe our relationship is strong enough to handle tough words, forget how much our mood or frustration can sneak into our tone, and assume saying it once should fix the problem. Good feedback takes patience. It means staying calm, asking questions instead of jumping to conclusions, and being willing to admit that we might not have the full story.

One conversation rarely changes behaviour – follow-up does

One of the biggest blind spots is thinking one conversation is enough. It rarely is – it’s the follow-up that makes the difference.

This is where most people drop the ball. The conversation ends, everyone feels relieved it’s over, and then… nothing more happens. No clear plan. No agreement on what should change. No time set to check back in and see how things are going.

Real change usually doesn’t happen after one serious talk. It happens when the conversation continues – when you come back to it, encourage progress, and remind each other what you’re working toward. People who keep in touch regularly find that feedback stops feeling like a big, scary event and becomes a normal conversation. And that’s when people are far more likely to listen – and actually change.

The bottom line

Feedback doesn’t fail because people refuse to grow. Most people do want to do better. It fails because we rush in without thinking it through, forget how strongly people react when they feel judged, use methods that make things worse instead of better, and then drop the subject before any real change has time to happen. The answer isn’t finding the perfect words to say. It’s building a strong relationship – staying in touch, being honestly interested in the other person, and sticking with the process until things actually improve.